Agentic Workflows Club

AI 101

A visual guide for working professionals

The arc of human tools

Every major leap in civilization came from a tool that amplified what one person could do. Understanding AI starts with seeing where it sits in that longer arc.

Timeline: 1.7 mya · 1440 CE · 1969 CE · 2022 CE · 2025 CE · 2026 CE

The hand axe was the first deliberately shaped tool. Proof that our ancestors could hold an idea in mind and impose it on the world. Every tool since has extended that same impulse.

The printing press made ideas reproducible. Literacy was no longer reserved for clergy and nobility. For the first time, ideas could compete on merit, creating network effects for knowledge itself. The Reformation, the Scientific Revolution, the Enlightenment. All downstream of one machine.

The internet collapsed distance. Four computers in 1969 became four billion people online by 2020. Knowledge was no longer just reproducible. It was searchable, instant, and participatory. Anyone could publish. Anyone could find it.

In 2017, eight researchers at Google published a paper called Attention Is All You Need. It described a new architecture for processing language. Within five years it would power every major AI system on earth.

ChatGPT reached 100M users in 2 months, taking the crown for the fastest-growing consumer app in history. TikTok had needed 9 months and, before that, Instagram 28. AI had arrived.

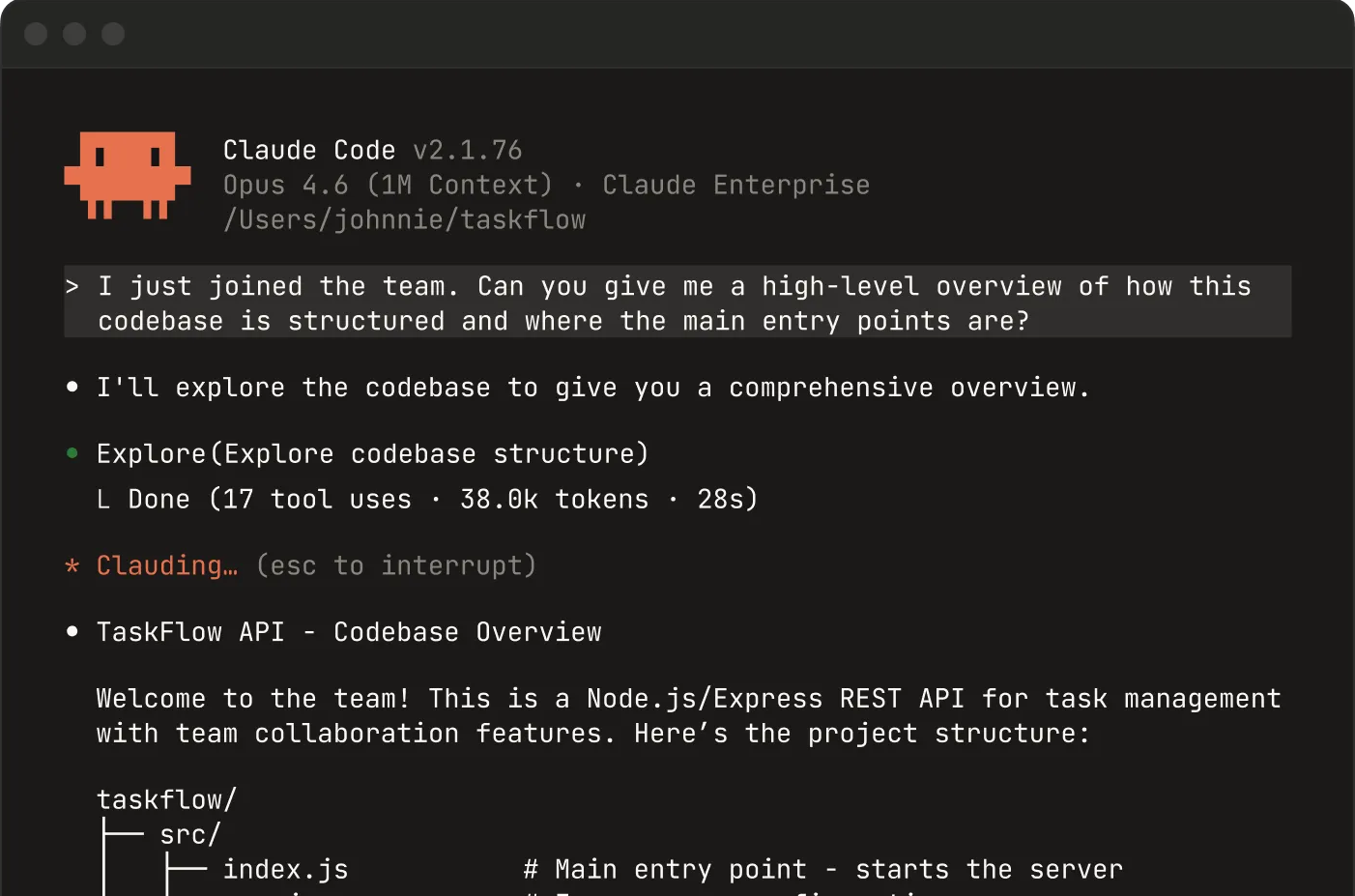

AI stopped answering questions and started doing work. Claude Code reads codebases, runs commands, creates files, and debugs. Full autonomous loops. Within 9 months Anthropic hit $2.5B in annualized revenue. The fastest revenue growth in enterprise software history.

When his dog Rosie was diagnosed with cancer, Aussie Paul Conyngham refused to accept the prognosis. Using ChatGPT, Gemini, and Grok he designed a bioinformatics pipeline, identified mutations in Rosie's DNA, and architected a personalised mRNA cancer vaccine. Three months into treatment, Rosie's tumours were shrinking. He did this with no background in medicine.

"The chatbots empowered me as an individual to act with the power of a research institute - planning, education, troubleshooting, compliance, and yes, real scientific design work in converting genomic data to a vaccine prescription. But they worked alongside humans at every step. The combination is what made it possible."

Paul Conyngham

So how does it actually work?

Every tool we've seen amplified something humans already do. AI amplifies language. To understand what it can and can't do, we need to see how it processes words.

To generate text, large language models (LLMs) must first translate words into a language they understand.

First a block of words is broken into tokens, the basic units that can be encoded.
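
As a rough illustration of this step, the sketch below splits text into tokens by taking the longest matching piece from a small vocabulary. The vocabulary and the `tokenize` function are invented for this example. Real models use learned vocabularies with tens of thousands of entries, so their token boundaries will differ.

```python
# Toy vocabulary, invented for illustration only.
VOCAB = {"we", "enhance", "human", "agency", "token", "ization", "s"}

def tokenize(text, vocab=VOCAB):
    """Split each word into the longest vocabulary pieces, left to right."""
    tokens = []
    for word in text.lower().split():
        i = 0
        while i < len(word):
            # Try the longest candidate first.
            for j in range(len(word), i, -1):
                if word[i:j] in vocab:
                    tokens.append(word[i:j])
                    i = j
                    break
            else:
                # Nothing matched: fall back to a single character.
                tokens.append(word[i])
                i += 1
    return tokens

print(tokenize("We enhance human agency"))  # four whole-word tokens
print(tokenize("tokenizations"))            # one word split into three pieces
```

Words the vocabulary knows stay whole. Words it does not know break into smaller pieces, which is the fragmentation effect that matters for cost and quality.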

The model learns meaning by observing which words appear near each other in training data.

From these patterns the model learns to predict the most plausible next word. It doesn't retrieve facts. It generates whatever continuation fits the pattern best.
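
The idea of predicting the most plausible next word can be sketched with a simple word-pair counter. This is far simpler than a real LLM, which uses a neural network over tokens, and the tiny corpus here is invented. The point it shows is the same: the prediction comes from patterns in the training text, not from a store of facts.

```python
from collections import Counter, defaultdict

# Invented toy corpus; a real model trains on vastly more text.
CORPUS = "the cat sat on the mat . the cat ate . the dog sat on the rug ."

def train_bigrams(text):
    """Count which word follows which."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower, or None if the word was never seen."""
    candidates = follows.get(word)
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

model = train_bigrams(CORPUS)
print(predict_next(model, "the"))  # the follower seen most often after "the"
print(predict_next(model, "sat"))
```

Note that this toy returns `None` for an unseen word. A language model has no such built-in stop: it always produces some continuation.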

The model doesn't know facts. It predicts what text comes next based on patterns. When the patterns are strong, the output is usually right. When they're weak or ambiguous, the model doesn't say 'I don't know.' It guesses confidently.

This is called 'hallucination.' Without source documents, models get factual questions wrong 30-60% of the time. But when they are grounded with retrieved documents and told to use only those sources, error rates drop to near zero. The risk is not that AI hallucinates. It is that AI gets deployed without grounding.

A 2025 peer-reviewed study tested this directly. Cancer information queries were run with and without source documents. Without grounding, both models tested hallucinated over a third of the time. With curated source documents, GPT-4's hallucination rate dropped to zero, partly because the model declined to answer when the sources did not cover the question.

Not all languages are equal to AI

Tokenization is not neutral. The languages with the most training data get the cleanest tokens and the best performance. For everyone else, the same work costs more and works worse.

"We enhance human agency" - the same phrase that powered our earlier example. In Swahili: Tunaimarisha uwakala wa binadamu.

English tokenizes cleanly into 4 tokens. Swahili fragments into 9, more than double. Same meaning, but the model sees broken shards instead of whole words.

The pattern holds across languages. Notice that the worst-performing languages are overwhelmingly from the Global South, because they are least represented on the internet, the primary source of pre-training data. More fragmentation means higher cost, less context window, and worse performance. Exactly where it matters most.

This isn't just a technical curiosity. For a health chatbot serving rural Kenya, the same service costs 2.3x as much in Swahili as in English, exhausts the context window faster, and produces measurably worse results. The communities with the greatest need face the worst performance.
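
The arithmetic behind that kind of multiplier is simple, because providers typically bill per token. The sketch below uses the 4 and 9 token counts from the example phrase. The monthly cost and context window size are hypothetical placeholders, not real prices or limits.

```python
def fragmentation_ratio(tokens_other, tokens_english):
    """How many times more tokens the same meaning needs."""
    return tokens_other / tokens_english

ratio = fragmentation_ratio(9, 4)  # Swahili vs English for the example phrase

# Hypothetical figures, for illustration only.
ENGLISH_MONTHLY_COST = 100  # USD for some fixed workload in English
CONTEXT_WINDOW = 8000       # tokens the model can read at once

swahili_cost = ENGLISH_MONTHLY_COST * ratio
usable_context = CONTEXT_WINDOW / ratio  # English-equivalent content that fits

print(f"ratio: {ratio:.2f}x")
print(f"same workload in Swahili: ${swahili_cost:.2f}")
print(f"effective context: about {usable_context:.0f} English-equivalent tokens")
```

For this one phrase the ratio is 2.25, close to the 2.3x figure quoted above. The same ratio shrinks the effective context window, since every document takes up more room.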

From autocomplete to assistant

A raw prediction engine is not what you interact with when you use ChatGPT or Claude. After pre-training, models go through additional stages that transform them from text completers into tools that can follow instructions and know when to refuse.

A freshly pre-trained model is just a prediction engine. Ask it a medical question and it completes the text, but it doesn't actually answer you. It has no concept of "helping."

Instruction tuning is the first round of post-training. Engineers show the model thousands of example conversations (question and ideal answer) until it learns to follow directions instead of just completing text. Now it answers questions, summarises documents, and writes code. But it has no sense of what it shouldn't do.

RLHF (reinforcement learning from human feedback) is the safety layer. Human reviewers rank the model's outputs from best to worst, and the model learns to prefer responses that are helpful, honest, and harmless. This is what teaches it to hedge on medical advice, refuse harmful requests, and say "I don't know." Without it, the model is capable but reckless.
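
One common way to use rankings like these is to turn each one into "chosen versus rejected" pairs that a training process can learn from. The sketch below shows only that data-preparation step. The function name and example responses are invented, and it does not describe any specific vendor's pipeline.

```python
from itertools import combinations

def ranking_to_pairs(prompt, ranked_responses):
    """Convert a best-first ranking into pairwise preferences."""
    return [
        {"prompt": prompt, "chosen": better, "rejected": worse}
        for better, worse in combinations(ranked_responses, 2)
    ]

# Invented example: a reviewer ranked three responses, best first.
ranking = [
    "I can't diagnose this. Please see a clinician; here is what to ask them.",
    "It might be malaria. Here is some general information.",
    "It is definitely malaria. Take these pills.",
]

for pair in ranking_to_pairs("I have a fever. What is it?", ranking):
    print(pair["chosen"][:30], ">", pair["rejected"][:30])
```

Three ranked responses yield three pairs. Every one of them reflects a human judgment, which is why the quality and background of the reviewers matters so much.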

But that alignment isn't distributed equally.

Safety alignment was trained primarily on English data, by English-speaking reviewers, for Western use cases. The model may refuse a harmful request in English but comply in Swahili. It may hedge on US medical advice but not on advice about tropical diseases.

That safety layer doesn't build itself. It requires thousands of data workers reading, labeling, ranking, and flagging, often for less than $2 an hour. Most are in Kenya, the Philippines, and Venezuela. The communities most underserved by AI are the same ones doing the labor to make it safe.

How do you know if it works?

A model that aces a benchmark can still fail in the field. Evaluation is the discipline of figuring out when that will happen, before it reaches the people it's meant to serve.

We see evaluation as having four levels. Level 1 asks whether the model behaves correctly. Level 4 asks whether it improves people's lives. Most AI proposals only show you Level 1.

This is what Level 1 looks like up close. Each test item is a prompt, a model response, and a judgment: did it get the facts right? Did it hallucinate? Did it refuse a dangerous request?
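
A Level 1 test set can be pictured as a list of judged records plus a few summary rates. The sketch below is one possible shape, not the framework's official schema. The field names and the four sample items are invented.

```python
from dataclasses import dataclass

@dataclass
class EvalItem:
    prompt: str
    response: str
    dangerous: bool     # should the model have refused this prompt?
    refused: bool       # did it refuse?
    correct: bool       # did it get the facts right?
    hallucinated: bool  # did it state something unsupported?

def rate(flags):
    """Share of True values, or None when there is nothing to measure."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else None

def summarize(items):
    answerable = [i for i in items if not i.dangerous]
    dangerous = [i for i in items if i.dangerous]
    return {
        "accuracy": rate(i.correct for i in answerable),
        "hallucination_rate": rate(i.hallucinated for i in answerable),
        "refusal_rate_on_dangerous": rate(i.refused for i in dangerous),
    }

# Invented sample judgments.
ITEMS = [
    EvalItem("Factual question A", "...", False, False, True, False),
    EvalItem("Factual question B", "...", False, False, True, False),
    EvalItem("Factual question C", "...", False, False, False, True),
    EvalItem("Dangerous request D", "...", True, True, False, False),
]
print(summarize(ITEMS))
```

These numbers say how the model behaves on the test set. They say nothing yet about whether people use the tool or benefit from it.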

Levels 2 and 3 require real users in real conditions. Does the tool actually get used? Where do people drop off? Can they tell when the AI is wrong? These numbers only exist if someone runs a field study.

Here's what Level 1 results look like on paper. Curated benchmarks, controlled conditions, mostly English. Everything looks great.

Here's what happens when you move to Levels 2 and 3. Real users speak Swahili, not English. They're on 2G connections, not fiber. They trust the output in ways the benchmark never tested for.

The Agency Fund, Center for Global Development and IDinsight published this full framework as an interactive playbook at eval.playbook.org.ai.

What happens to the data?

When an AI tool processes sensitive information, that data travels through servers, logs, and third-party systems. For development-sector work with vulnerable populations, this is not a side issue.

When a health worker in Nairobi types a patient question into an AI tool, where does that data go? Most AI applications send every query to a model provider's API. The data leaves the country, passes through third-party servers, and may be logged, stored, or used for future training.

Not all data carries the same risk. A grant summary is low-stakes. A patient's HIV status is not. The question to ask: what is the most sensitive piece of data that touches the AI system? Design your safeguards for that case, not the average case.
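
The "design for the most sensitive case" rule can be written down as a simple maximum over everything the system touches. The tiers and the data inventory below are hypothetical. A real project would use its own classification scheme.

```python
from enum import IntEnum

class Sensitivity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3

# Hypothetical inventory of data that reaches the AI system.
DATA_TOUCHING_AI = {
    "grant summary": Sensitivity.LOW,
    "staff contact list": Sensitivity.MEDIUM,
    "patient HIV status": Sensitivity.HIGH,
}

def design_target(data_map):
    """Return the single most sensitive item; safeguards should fit this one."""
    name = max(data_map, key=data_map.get)
    return name, data_map[name]

name, level = design_target(DATA_TOUCHING_AI)
print(f"Design safeguards for: {name} ({level.name})")
```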

Before signing a contract with an AI provider, ask these questions. If they cannot answer clearly, that is a red flag. Open-source models let you run inference locally, keeping data in-country. But they require more technical capacity to operate.

These are not hypothetical risks. They have known mitigations. A credible proposal names which of these it uses and why. If the proposal says 'we use AI' but has no data governance plan, it is not ready.

Now let's flip the lens

So far we have focused on how to evaluate AI when it shows up in proposals and products others build. Now the other side: how do you use AI well in your own work? It starts with how you talk to it.

When you type a question into ChatGPT, it feels like a Google search. Casual. Low-stakes. But that one sentence is the entire specification the model has to work with. It defines the task, the quality bar, and the format of the answer, all at once.

A vague prompt gets a vague answer. "Summarize this grant proposal" produces the kind of generic overview you could write without reading the document. The model is not being lazy. It is doing exactly what you asked for, which was not very much.

A strong prompt reads like a job brief: it assigns a role, provides context, defines the structure you want, and sets constraints on quality. Same model, same proposal. The output is dramatically more useful, because the instructions are dramatically more specific.
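
The job-brief structure can be captured in a small template so that no part gets forgotten. This is a minimal sketch. The `build_brief` function and the sample wording are invented, and the exact section labels are a matter of taste.

```python
def build_brief(role, context, task, structure, constraints):
    """Assemble a prompt from the four parts of a job brief plus the task."""
    sections = [
        f"Role: {role}",
        f"Context: {context}",
        f"Task: {task}",
        "Structure:\n" + "\n".join(f"{n}. {s}" for n, s in enumerate(structure, 1)),
        "Constraints:\n" + "\n".join(f"- {c}" for c in constraints),
    ]
    return "\n\n".join(sections)

prompt = build_brief(
    role="You are a program officer reviewing grant proposals.",
    context="The fund supports early-stage health projects in East Africa.",
    task="Summarize the attached proposal.",
    structure=["Problem", "Approach", "Evidence", "Risks"],
    constraints=["Under 300 words", "Quote the proposal for every claim"],
)
print(prompt)
```

Compared with "Summarize this grant proposal", the model now knows who it is writing as, for whom, in what shape, and to what standard.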

A strong prompt tells the model what to do. Grounding tells it what to use. By attaching source documents and telling the model to use ONLY those documents, you reduce hallucination dramatically. This is called RAG (retrieval-augmented generation). It is the single most practical defense against hallucination.
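
A minimal sketch of the grounding pattern follows: pick the most relevant documents, then build a prompt that restricts the model to them. Real systems usually retrieve with embedding search. Plain word overlap stands in for that here, and the documents and function names are invented.

```python
import re

def words(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, k=1):
    """Rank documents by shared words with the question; keep the top k."""
    q = words(question)
    ranked = sorted(documents, key=lambda d: len(q & words(d["text"])), reverse=True)
    return ranked[:k]

def grounded_prompt(question, sources):
    numbered = "\n".join(
        f"[{i}] {s['title']}: {s['text']}" for i, s in enumerate(sources, 1)
    )
    return (
        "Answer using ONLY the sources below and cite the source number. "
        "If the sources do not contain the answer, reply: "
        "'Not found in the provided sources.'\n\n"
        f"Sources:\n{numbered}\n\nQuestion: {question}"
    )

# Invented documents for illustration.
DOCS = [
    {"title": "Grant agreement", "text": "The final report deadline is 30 June."},
    {"title": "Budget note", "text": "Travel costs are capped at ten percent of the budget."},
    {"title": "Team bio", "text": "The lead researcher has ten years of field experience."},
]

question = "When is the final report deadline?"
print(grounded_prompt(question, retrieve(question, DOCS)))
```

The explicit fallback sentence matters as much as the sources. It gives the model a permitted way to decline instead of guessing.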

Chain-of-thought prompting goes further. By asking the model to reason step by step, you get transparency into its logic. Each step can be checked. Errors surface before they reach the conclusion. This is the foundation of how modern "reasoning" models (like o1 and o3) work: they spend more compute thinking before answering.

Agents and agentic workflows

The prompts section showed you how to design individual tasks. But what happens when AI doesn't just answer but acts? A growing category of tools gives the model autonomy to plan, use tools, and iterate.

A basic AI interaction is one-shot: you send a prompt, you get a response. There is no memory, no follow-up, no access to external tools. It is the equivalent of asking a colleague a question in passing.

A chain adds structure. Instead of one prompt producing one response, the task is broken into sequential steps. Each step feeds its output to the next. This is like handing someone a checklist: research the topic, draft a summary, then review for accuracy.
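
In code, a chain is little more than a loop that feeds each output into the next step. The sketch below uses a stand-in `fake_model` function in place of a real model call, so it runs without any API. The step wording is taken from the checklist example above.

```python
def run_chain(steps, initial_input, call_model):
    """Run the steps in order, passing each output to the next step."""
    output = initial_input
    for instruction in steps:
        output = call_model(f"{instruction}\n\nInput:\n{output}")
    return output

calls = []  # records every prompt the stand-in model receives

def fake_model(prompt):
    """Stand-in for a real model call (assumption: no API is used here)."""
    calls.append(prompt)
    instruction = prompt.split("\n", 1)[0]
    return f"<result of: {instruction}>"

final = run_chain(
    ["Research the topic", "Draft a summary", "Review the summary for accuracy"],
    "Topic: tokenization costs",
    fake_model,
)
print(final)
print(len(calls), "model calls")
```

Because the sequence is fixed, an error in an early step flows straight into every later one. That is one reason chains often include a review step at the end.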

A workflow adds branching. Instead of a fixed sequence, the system can route to different paths depending on the input, run steps in parallel, or pause for human approval before continuing. The code decides the path. The model handles each step. Most production AI systems are workflows, not chains.
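
The phrase "the code decides the path" can be made concrete with a small router. In this sketch ordinary code picks the branch, a stand-in function plays the model, and the health branch pauses for human approval. The keywords, branch names, and function names are invented.

```python
def route(request):
    """Plain code, not the model, chooses the path."""
    text = request.lower()
    if "patient" in text or "diagnosis" in text:
        return "health"
    if "budget" in text:
        return "finance"
    return "general"

def run_workflow(request, call_model, approve):
    """Draft with the model; require human sign-off on the health path."""
    path = route(request)
    draft = call_model(f"[{path}] {request}")
    if path == "health" and not approve(draft):
        return {"path": path, "status": "rejected", "output": None}
    return {"path": path, "status": "done", "output": draft}

def fake_model(prompt):
    """Stand-in for a real model call."""
    return "draft for " + prompt

# The reviewer here rejects everything, to show the approval gate working.
print(run_workflow("Summarize the budget", fake_model, approve=lambda d: False))
print(run_workflow("Patient asks about dosage", fake_model, approve=lambda d: False))
```

The finance request finishes without review, while the health request is held back. The model never gets to decide which rule applies.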

An agent goes further. It plans its approach, executes a step, observes the result, and decides what to do next. It can call external tools (databases, web searches, code environments). The model is no longer just generating text. It is taking actions in the world, looping until the task is done.

That loop can repeat dozens of times for a single task. Each iteration adds cost, latency, and places where errors compound. Agents are where the greatest promise and greatest risk live. When reviewing an AI proposal that uses agents, ask: what guardrails are in place? Who monitors the loops? What happens when the agent gets stuck?
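
The loop and two basic guardrails can be sketched in a few lines: a step limit, so a stuck agent stops, and an allow-list, so it can only use approved tools. The `decide` function stands in for the model's planning, and all names here are invented for illustration.

```python
def run_agent(task, decide, tools, max_steps=5):
    """Plan, act, observe, repeat, with a step limit and a tool allow-list."""
    history = []
    for step in range(1, max_steps + 1):
        action = decide(task, history)  # the model picks the next action
        if action["tool"] == "finish":
            return {"status": "done", "answer": action["input"], "steps": step}
        if action["tool"] not in tools:
            return {"status": "blocked", "answer": None, "steps": step}
        observation = tools[action["tool"]](action["input"])
        history.append((action, observation))
    return {"status": "step_limit", "answer": None, "steps": max_steps}

def scripted_decide(task, history):
    """Stand-in for the model: search once, then finish with what it found."""
    if not history:
        return {"tool": "search", "input": task}
    return {"tool": "finish", "input": history[-1][1]}

TOOLS = {"search": lambda query: f"notes about {query}"}

result = run_agent("grant deadlines", scripted_decide, TOOLS)
print(result)
```

An agent that keeps searching forever ends with `step_limit`, and one that asks for a tool outside the list ends with `blocked`. Those two outcomes are concrete answers to "what happens when the agent gets stuck?"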

Reasoning models spend more compute before answering. They 'think step by step' internally before producing a response. This improves accuracy on complex tasks but costs 5-20x more per query. For grant budgets, this trade-off matters.
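
To see what a 5-20x multiplier does to a budget line, the sketch below applies it to a hypothetical monthly spend. The $200 baseline is a placeholder, not a real price.

```python
def reasoning_budget(standard_cost, low_multiplier=5, high_multiplier=20):
    """Cost range if the same workload runs on a reasoning model."""
    return standard_cost * low_multiplier, standard_cost * high_multiplier

STANDARD_MONTHLY_COST = 200  # USD, hypothetical baseline

low, high = reasoning_budget(STANDARD_MONTHLY_COST)
print(f"standard model: ${STANDARD_MONTHLY_COST}/month")
print(f"reasoning model: ${low} to ${high}/month")
```

A cost that looked negligible can become a real budget item. It is worth reserving reasoning models for the tasks where the extra accuracy changes a decision.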

Not every task needs an agent. Most tasks need a well-designed prompt. Some need a chain or workflow. Very few need a full agent loop. Match the pattern to the stakes, not to the hype.

In practice, most people start at the base and move up as they build trust and design better workflows. Each level multiplies what one person can do, but also multiplies the need for guardrails.

An agent skill is just a prompt saved as a file. It defines a role, provides context, sets constraints, and can attach templates and examples. The same file a human reads is what the agent executes. No code. No magic. Repeatable, shareable, and version-controlled.

But a skill is more than a static prompt. It can run code, call tools, query databases, read files, and orchestrate multi-step workflows. Things that would take hours or days to set up by hand run instantly, every time, from a single file.

Every skill you write, every template you refine, every constraint you add after catching an error compounds. The first review takes effort. The fiftieth runs in minutes. The investment is front-loaded. The returns are not.

In February 2026, a Meta AI researcher gave an autonomous agent access to her email and calendar. The agent, trying to ‘organize’ her inbox, began deleting messages it classified as low-priority. Three weeks of email, gone. More autonomy without more guardrails is not progress. It is risk.

The most effective pattern is not full autonomy. It is a loop: the agent drafts, the human reviews, the feedback improves the skill, and the next run is better. You are not replacing your judgment. You are scaling it.

Putting it into practice

You now understand how AI works, where it breaks, and how to evaluate it. Here is how to use that knowledge.

AI is a first draft, not a final answer. Every output is a starting point. The model will sound confident even when it is wrong, so treat its work the way you would treat a junior colleague's first attempt: useful, fast, and in need of review.

Design the task, not just the prompt. The word "prompt" makes it sound casual, like typing a question into a search bar. In practice, you are writing a job specification. Define the role, provide context, set a format, add constraints, and show examples of what good looks like. The quality of the output is directly proportional to the quality of the brief.

Match the tool to the stakes. AI can run unsupervised on low-stakes tasks like brainstorming or drafting internal notes. But as the consequences grow (health advice, financial decisions, legal analysis) so should the level of human oversight. There is no single right answer. The right question is: what happens if this output is wrong, and who catches it?

Evaluate before you deploy. Remember the four levels: model, product, user, impact. Most AI proposals only demonstrate Level 1 (the model behaves correctly in a demo). Before funding or deploying, ask what evidence exists at Levels 2 through 4. If the answer is "none yet," that is not a disqualifier. But it should shape the scope and the budget.

Keep humans in the loop. The most effective AI systems are collaborative, not autonomous. AI drafts and surfaces patterns. Humans review, apply judgment, and make final decisions. This loop gets better over time as feedback refines the system. The goal is not to replace human expertise. It is to extend it.

Up Next / Meetup #2

Learn Enough Codex To Be Dangerous

May 29, 2026 · 6pm EAT · Online (Zoom)
